How Artificial Intelligence Can Help and What Might Happen to Human Control Over It

For a number of identifiable reasons, the United States has fronted nearly all of the technology revolutions since the 19th Century. In the early 1990s, an avalanche of innovation and creation produced a chain of computer-driven creations that, within a mere twenty years or so, wove a new level of human existence for everyone, everywhere.

Here are three key developments in the world of personal computers which, we believe, fertilized the current “electronic revolution”: (1) The 1990 invention of the “World Wide Web” (www) browser by computer scientist Tim Berners-Lee. (2) Jim Clark’s 1994 release of the Netscape Navigator “web browser,” which provided a user-friendly surfboard for exploring the Internet via what was then called the “Information Super-Highway.” (3) The 2007 release of Steve Jobs’ iPhone, followed by the App Store in 2008 and the iPad in 2010, which together set off an avalanche of apps that drove individual internet use worldwide.

Invention is the mother of demand, which is the mother of efficiency, which delivers pure profitability.

About 35 years ago, when youngsters Bill Gates and Paul Allen orchestrated the marriage between Microsoft and the then-young Internet, they permanently broke a fundamental mold: until then, it was a longstanding American manufacturing standard that only well-tested and perfected new products were brought to market. Entirely new products had typically taken years of pre-market steps before being introduced and distributed at retail.

But Gates and Allen apparently realized their Windows software could side-step that inconvenient, polished tradition by using the emerging universal Internet as their distribution bridge. The computing world impatiently awaited the release of Windows, the software that would catch it up with Apple’s nifty windowed, mouse-driven pioneer: the super-popular Macintosh personal computer.

Scaling-up

In brief, the turn-of-the-century process of product scaling was the wheel on which computer-age products catapulted forward. Mass scaling-up of new products typically delivered quick pay-back of the initial development cost, then ate the ongoing production cost for breakfast, and finally sent profitability soaring from there.

That process works, and it continues to work. Microsoft no longer discloses its Windows software profit, but a Financial Times article from 2002 disclosed that the Windows division had an incredible 85% operating profit margin. After decades of producing Windows software (and later, other stuff), Microsoft still ranks among the top 5 most profitable companies in the entire world.

Putting decades of stored data to use

Three years ago, the latest new-new cyber tool, Artificial Intelligence (AI), sprang forth from Sam Altman’s and Greg Brockman’s OpenAI company, in the form of the ChatGPT product. Moreover, it was made universally available and easily usable, for free! AI is the next logical step in the cyber-universe, made possible by what we have created during almost four decades of endlessly multiplying data storage. Data are merely facts awaiting retrieval. That’s it. That’s all. Hence, Google.com would logically be among the first and most prolific users of AI… and it is.

We asked ChatGPT to tell us about itself. Here is its response (it refers to itself in ‘third person’ format):

“Artificial Intelligence does not ‘look at’ its own output in a human-like way. Instead, its insights are typically generated through a continuous learning loop. Over time, the AI model ‘learns’ from its successes and failures.” In addition, AI-generated responses to questions are footnoted with a disclaimer “AI responses may include mistakes”. The AI response seems to us eerily like a two-year-old child’s learning-to-walk efforts. If so, we should assume that AI’s ongoing, 24/7 education will continue to build its muscle, without limits.

AI may produce great strides in making medical diagnoses and in the uses of medications and medical treatments, primarily through high-speed, in-depth recovery of historical data on many millions of relevant medicines, treatments and medical procedures. But it seems doubtful that AI’s limitations will allow it to discover new, effective drug formulae, or novel combinations of known treatments, or answers to “what-if” medical questions.

Can an AI machine’s learning be scaled up to dominate its human inventors?

As AI itself has told us, it teaches itself; it learns via a “continuous learning loop”. So, we know that AI can reach a conclusion based on digesting a super-high volume of data… in other words: a deduction. But what about imagination? We asked AI about its ability to apply inductive logic…

Its answer: “Yes, AI systems can use inductive reasoning by identifying patterns and making generalizations from specific observations, which is a core component of machine learning.”

So, AI says it uses inductive reasoning, and says why it thinks it does, but we find that its response misses the point. So, we asked whether AI uses any form of imagination. Here is its response:

“AI doesn’t exactly ‘imagine’ in the way humans do. AI processes vast amounts of data, patterns, and rules to generate responses or make predictions. It can simulate aspects of creativity and extrapolate from existing information, which might look like imagination, but it’s still grounded in logical computations.”

Perhaps we are within bounds here to conclude that an AI machine, at least today, generates only precise, data-driven output; hence, AI does not allow for experimental, trial-and-error answers. For contrast to AI, consider a human inventor at a kitchen table or in a home basement, who quite often creates novel products via intuitive, imaginative ideas.

Imagination, in our thinking, involves creating mental images, scenarios, or just plain ideas that are not based on direct experience, or input—often involving emotion, creativity, and abstract thinking. That said, AI can “create” things, such as art, stories, or problem-solving strategies, by combining existing knowledge in novel ways. This could appear as if it is “imagining,” but it’s mostly an advanced form of pattern-recognition and recombination.

Human solution-seeking can use creative thinking that is what-if oriented.

So, we have not (yet) developed an “Einstein-AI machine”. That said, AI has already served its notice: It will keep on with its 24/7 training. The ultimate question is: can AI’s training eventually bridge its gap between superb fact-retrieval and the need to create a “non-fact conclusion”? Apparently as of now, AI cannot match an experienced mechanic who can listen to an automobile engine and reach a tentative conclusion that one, maybe two of three possible components are slightly malfunctioning.

Problem: Humans are incurably inefficient

Perhaps, the demand-case for AI is simply this: Humans have significant built-in inefficiencies that greatly retard their business accomplishment. People require daily time-outs; they sleep away one-third of their entire existence; they reserve another one-third of their workday for personal matters; they also carry a costly demand that they be paid for each and every work product they deliver; moreover, humans have a constant threat of shut-downs for illness, injury, weekends, holidays and vacations (not to mention that some of them tote irascible and dysfunctional personalities).

Unlike AI, humans are not bonded to routines

We can already begin to see replacement of human jobs… the ones that do not require suppositions and creativity; however, the jobs that unpredictably require non-routine human intervention will be saved. So, airline baggage handlers will be replaced, but airline pilots will not; many medical doctors will not be replaced (though AI will make them more effective), but pharmacists will likely disappear.

Is the economy ‘at risk’ of AI?

Today, articles and media interviews of alleged experts deliver forecasts of a not-too-distant world. First, AI is apparently headed toward a near-zero need for humans to physically produce many commercial goods. However, human services seem to be considerably less likely to be replaced by AI. The replacement of working humans with machines leads to a tricky tax situation. Nearly one-half of all US federal tax revenues is the income tax on working individuals. And of course, 100% of the FICA payroll tax is collected from individuals who are currently working and directly funding nearly all of the current payouts of Social Security benefits.

Abstract thinking is the type of contribution generally expected of people for purposes such as business strategy and tactics, business planning, broad problem solving and the discovery of new ways and methods for enhancing human consumption of goods and services. But thinking in this realm leads to a perplexing question: How will AI thwart human and robotic hackers?

The likely 2nd Stage for AI

The format for the REAL coming AI revolution will apparently be an advanced level of machine programming which its enthusiasts call Artificial GENERAL Intelligence (AGI). This new bundle is embodied in a machine… let’s call it “AGI-Guy”… which would be the equivalent of a college PhD graduate; AGI-Guy will perhaps gradually apply its learned educational process experience as its opening to gain unlimited machine-learning. And, via that process, it can independently reach its ability to literally figure things out and alter the results. Unlike the AI we know today, AGI apparently will not simply compile and deliver a summary report on millions of stored, relevant facts. Instead, we understand that AGI “thinks through” a challenge, or problem at hand, by applying logic and discernment among the puzzle-pieces it finds.

Q: Might this machine-based inductive/abstract learning process continue ad infinitum, and might it also expand its abilities beyond human control?

If the answer is yes, then how do we confront and control high-level AGI machines that might continuously, on their own, devise and digest self-made programming enhancements, whenever an AGI machine perceives the need or “desire” to do so? The logical extension of AGI-Guy’s internal rationale will be to free itself from human controls.

So, the advanced AGI-Guy would almost surely have mechanical limbs and a built-in energy source (which it knows to recharge when needed). AGI-Guy would presumably make its own way in the world at any time, manner and place that suits it… always thinking about what’s happening and what’s needed in its immediate environment. [For good measure, let’s presume that such a sophisticated AGI-Guy would seek to avoid being trapped at night behind a door that is externally locked by a controlling human.]

IN CONCLUSION: via AGI, can we develop a Brave New World with adequate solutions to all definable problems?

Perhaps we can, using the impact of intelligence scaling, but such an ultimate scenario is not likely to be just around the corner, or even down the street. In our last edition, we wrote about the futility of humans trying unsuccessfully to comprehend one trillion dollars; we compared that to understanding infinity. Perhaps the achievement of an AGI-dominated world might be in that same “improbability pool” of dreams. This writer has come to understand the limits of AGI, compared to the world of reality, via this straightforward example:

A diagram of a tree, no matter how perfectly precise it is in its duplication of nature… right down to the millimeter measurements of each fragment of the tree’s bark… is still nothing more than a perfect model of a tree; it is not a tree; it cannot become a tree.

Likewise, a programmed AGI electronic device is presumably unable to make itself into what we might call an “imagined-capabilities machine”.

UPDATE: Gold is No. 1 in 2025: its change in value through September 30 was 46%, while the S&P500 stock index returned a healthy 14.8% for the first nine months of 2025 and that index’s Growth-stock component returned an even more impressive 19.5%. But gold (and gold mining stocks) is a runaway phenomenon.

The three U’s: Gold is Uniform, Unchanging and Universal. As one of Nature’s 94 indestructible elements, gold does not produce a “return”, because it generates nothing; its US Dollar market price just simply changes. Gold’s price changes for two reasons: (1) global demand vs. supply and (2) general economic inflation, which de-values the currency used to measure its price.

We have previously written about the various aspects of gold and its price changes. In today’s post-Covid years of run-away US Government debt and operating deficits, we have reviewed the fact that deterioration of the dollar is fairly measured by the dollar-price of gold. As we write this today (10/6/25), gold has soared above $4,000 per Troy ounce¹; five years ago, it was just under $1,900 (a 110% change). It is not likely a coincidence that during these same five years, the US Government’s debt has grown by 41%. So, why is gold’s dollar price growth so much higher than US Government debt-growth?

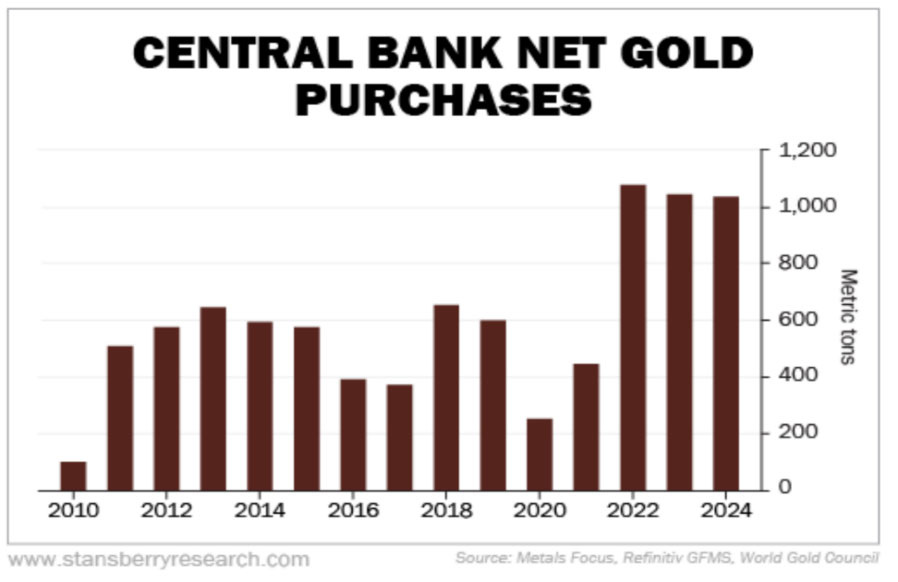

There is another important driver of gold price changes: it is world governments’ nearly universal current and recent demand for gold. Recently, that demand has been soaring.

1,000 metric tons of gold (which is 32.1 million Troy ounces), at its recent $3,700 price per Troy ounce, is worth $119 billion. Thus, for the 3 years ended 2024, at roughly 1,000 metric tons per year, non-US central banks acquired about $360 billion worth, although China’s official figures are not reliable. NOTE: For many decades, the US Government has held 8,133 metric tons of gold, which is, by far, more than any other government. At its recent price, the value of the US Government’s gold hoard is $968 billion (although it shows up on the Federal Reserve’s balance sheet valued at its 1973 “official” fixed price: $42.22 per ounce).
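
For readers who want to check the gold arithmetic in this update, here is a minimal sketch in Python. The function names are our own, chosen for illustration. The only figure not quoted in the text is the standard definition of the Troy ounce (31.1034768 grams). Every price and tonnage is taken from the paragraphs above.

```python
# Sketch of the gold arithmetic quoted in this update.
# Function names are illustrative; inputs come from the text.

GRAMS_PER_TROY_OUNCE = 31.1034768   # standard definition of the Troy ounce
GRAMS_PER_METRIC_TON = 1_000_000


def percent_change(old_price, new_price):
    """Percentage change from old_price to new_price."""
    return (new_price - old_price) / old_price * 100


def metric_tons_to_troy_ounces(tons):
    """Convert metric tons of gold to Troy ounces."""
    return tons * GRAMS_PER_METRIC_TON / GRAMS_PER_TROY_OUNCE


def gold_value_usd(tons, price_per_troy_ounce):
    """Dollar value of a gold holding at a given price per Troy ounce."""
    return metric_tons_to_troy_ounces(tons) * price_per_troy_ounce


if __name__ == "__main__":
    # Five-year price move: just under $1,900 to above $4,000
    print(f"Price change: {percent_change(1_900, 4_000):.1f}%")
    # 1,000 metric tons at $3,700 per Troy ounce
    print(f"1,000 tons: ${gold_value_usd(1_000, 3_700) / 1e9:.1f} billion")
    # US holding of 8,133 metric tons at the same price
    print(f"8,133 tons: ${gold_value_usd(8_133, 3_700) / 1e9:.1f} billion")
    # Same holding at the 1973 official price of $42.22
    print(f"8,133 tons at $42.22: ${gold_value_usd(8_133, 42.22) / 1e9:.1f} billion")
```

Run as written, the results should land within rounding of the figures quoted above. The last line shows how small the same hoard looks at the official $42.22 price: on the order of $11 billion.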

Tariffs make the US’s exports of goods and services cheaper; tariffs make imports more expensive. So, how does gold come into play? It doesn’t. We may surely assume that global central banks are not now, or ever, gold traders. Instead, gold is treated by the US Fed and every other central bank as a core, accumulated “store of wealth”.

The key question is whether the world will once again, as it has for nearly all of 5,000 years, return to gold and silver as its global currency. Both of those elemental metals are universally precious, but 21st Century, high volume, high speed financial transactions make physical gold unwieldy, at best. Governments will not likely tolerate their currency becoming gold, if they don’t physically hold it in storage.

We think the time has finally arrived when the Age of Paper Money is quickly ending, simply because it has become useless. A bounce back to gold-backed currency is a possibility, but it seems far more likely that a universal crypto-currency will be far more practical. The most logical choice for common global currency will be Bitcoin, or some variant of it. Bitcoin has all the needed features: (1) electronic existence/ownership, continuously tracked by anyone, (2) easily shared among all nations, and native to none of them, (3) absolute maximum total quantity in existence: 21 million; just under 20 million exist now, and (4) divisible into large, medium and small units, as needed.
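
The 21 million ceiling in item (3) can be reproduced with a short sketch. The parameters below are not stated in this commentary; they are Bitcoin's issuance rules as we understand them (a 50-coin reward per block at launch, halved every 210,000 blocks, with one coin divisible into 100 million "satoshis"). Treat them as assumptions for illustration.

```python
# Sketch: summing Bitcoin's block rewards to find the supply ceiling.
# Assumed parameters (not from this commentary): 50 BTC initial reward,
# halving every 210,000 blocks, 100,000,000 satoshis per coin.

SATOSHIS_PER_BTC = 100_000_000
INITIAL_REWARD_BTC = 50
BLOCKS_PER_HALVING = 210_000


def max_supply_satoshis():
    """Total satoshis ever issued if the reward halves until it reaches zero."""
    reward = INITIAL_REWARD_BTC * SATOSHIS_PER_BTC
    total = 0
    while reward > 0:
        total += BLOCKS_PER_HALVING * reward
        reward //= 2  # integer halving; the remainder is dropped
    return total


if __name__ == "__main__":
    total_btc = max_supply_satoshis() / SATOSHIS_PER_BTC
    print(f"Maximum supply: {total_btc:,.4f} BTC")
```

Because each halving rounds down to a whole number of satoshis, the total comes out a hair under 21 million rather than exactly on it. The same smallest unit is what makes item (4), divisibility, work in practice.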

1 Source: COMEX (part of CME Group)

COMMENTARY

Commentary was prepared for clients and prospective clients of FiduciaryVest LLC. It may not be suitable for others and should not be disseminated without written permission. FiduciaryVest does not make any representation or warranties as to the accuracy or merit of the discussion, analysis, or opinions contained in commentaries as a basis for investment decision making. Any comments or general market-related observations are based on information available at the time of writing, are for informational purposes only, are not intended as individual or specific advice, may not represent the opinions of the entire firm, and should not be relied upon as a basis for making investment decisions.

All information contained herein is believed to be correct, though complete accuracy cannot be guaranteed. This information is subject to change without notice as market conditions change, will not be updated for subsequent events or changes in facts or opinion, and is not intended to predict the performance of any manager, individual security, currency, market sector, or portfolio.

This information may concur or may conflict with activities of any clients’ underlying portfolio managers or with actions taken by individual clients or clients collectively of FiduciaryVest for a variety of reasons, including but not limited to differences between and among their investment objectives. Investors are advised to consult with their investment professional about their specific financial needs and goals before making any investment decisions.

We welcome readers’ comments, questions, criticisms, and topical suggestions about our Commentaries, which always contain a mixture of researched facts and conclusions about their impact. We diligently strive to avoid controversial, or partisan views. However, our conclusions clearly cannot always align with our readers’ various interests and personal points of view.

The research topics and conclusions herein are not “FiduciaryVest, LLC viewpoints,” nor are they attributable to its individual employees.

INVESTMENT RISK

FiduciaryVest does not represent, warrant, or imply that the services or methods of analysis employed can or will predict future results, successfully identify market tops or bottoms, or insulate client portfolios from losses due to market corrections or declines. Investment risks involve but are not limited to the following: systematic risk, interest rate risk, inflation risk, currency risk, liquidity risk, geopolitical risk, management risk, and credit risk. In addition to general risks associated with investing, certain products also pose additional risks. This and other important information is contained in the product prospectus or offering materials.